For me personally, the most interesting feature in Veeam v7 is the backup copy job. Why? Well, it solves some of the most important challenges Veeam users were having with backup policies.

First of all, you can now tier your backups. Start by creating fast backups on fast disks with a limited number of restore points, then use the backup copy job to copy the data to slower disks for longer retention. This was possible before as well ... with scripts!

Second, you can now apply GFS-like (grandfather-father-son) retention policies. I like that GFS is only available on the backup copy job. It forces people to think about tiering in combination with GFS on slow disks, so that you still have a (limited) number of fast restore points for Instant VM Recovery and SureBackup. This was possible before as well ... with scripts!

Lastly, WAN acceleration is now built into the product. With previous versions, people tried to get their backups off-site, but not always in the most successful way. They used scripts with rsync and Robocopy to ship the backups to a second location, but didn't always like how much bandwidth this required or the manual actions they had to take. Now you can get your backups off-site over very small connections. Best of all, you no longer need somebody to manually export the tapes and take them home every day to keep your corporate data safe.

But what about those off-site copies? Getting your restore points off-site is the easy part: you can just use the backup copy job. Actually, there are two ways to get your backups off-site: a push strategy and a pull strategy.

In the push strategy, you install the Veeam B&R management server at the source location. Backups are made by a source proxy to a source repository, and the backup copy job then sends the data to the remote location. This is mostly used when your IT staff works at the source location. One disadvantage of this scenario is that you cannot share WAN accelerators between management servers in v7. Since every location runs its own management server, you will have to install a separate Windows server for each WAN accelerator instance. On the other hand, a connection outage won't stop the local backups from running.

In the pull strategy, you install the Veeam B&R management server at the remote location (or HQ in a ROBO design). You still have a source proxy and a source repository, so backups are made locally but are scheduled by the remote location. The backup copy job then copies the data to the remote location. This scenario is mostly used when you have centralized IT and very stable connections between HQ and your branch offices. Because the management server runs centrally, you don't need to deploy a Windows server for each pair of WAN accelerators. In fact, when you set up a new WAN accelerator pair, the server will copy the cache from an existing WAN accelerator at HQ.

In the push strategy, your local and remote backups are visible as restore points at the source side. This is good when you want to do local restores. But what if you want to do a file-level recovery at the remote location? In that case you need a clean install of the backup management server there and have to import the backups into it.

In the pull strategy, restoring at the remote side or HQ is easy. Restoring locally is hard, however, because you need a clean install of the backup management server and have to import the backup locally just to do a file-level recovery.

How to solve it? Well, in both scenarios I would start by installing an empty local management server. Please don't forget to install the PowerShell snap-in as well, as we need it for the automatic import. From a licensing perspective, you can reuse your license because this empty install won't be backing up any VMs, so you won't consume any sockets. Note that both Veeam servers should run the same version, or at least the importing server should be at the same level as or newer than the one creating the backups.

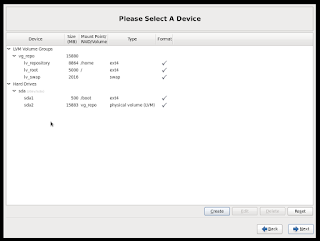

Once you have installed the empty server, you can start by adding the repository directory as a new repository on the cleanly installed server. When you add the repository, give it a name that starts with the prefix "WANBCJ". Alternatively, you can alter the script I am providing later.

In the last step of the wizard, you can then tick the option to automatically import existing backups.

After this is done, you should see your backups appear as "imported", and you can start FLR or Instant VM Recovery in the regular way.

One thing that won't happen, however, is that your repository is automatically rescanned for new restore points. So if, a couple of weeks later, you need to restore from a freshly copied restore point, you will have to rescan your repository manually. It's quite easy to do this: just go to the repository in Backup Infrastructure, right-click it and choose Rescan.

Now here is the fun part: you can easily automate this. Just open a PowerShell window by going to the main menu (it's the blue button in the top left corner of the GUI).

OK, so the basic script is actually one line of code:

    Get-VBRBackupRepository | Where-Object { $_.Name -match "^WANBCJ" } | ForEach-Object { Sync-VBRBackupRepository -Repository $_ | Out-Null }

You can see why WANBCJ is required as a prefix: the code matches any repository whose name starts with WANBCJ. For each of these repositories we then request a resync. You can see the result popping up in the history tab.
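To see what the `-match "^WANBCJ"` filter selects without touching a Veeam server, here is a minimal sketch that runs the same test against plain strings. The repository names are made up for illustration.

```powershell
# Hypothetical repository names, for illustration only.
$names = 'WANBCJ-Branch1', 'wanbcj-branch2', 'Default Backup Repository', 'HQ-WANBCJ'

# Same test as in the one-liner: ^ anchors the pattern to the start of the name.
# -match is case-insensitive by default; use -cmatch for a case-sensitive test.
$selected = @($names | Where-Object { $_ -match '^WANBCJ' })

$selected
```

Only the first two names are listed. 'HQ-WANBCJ' is skipped because the prefix is not at the start of the name, so a repository has to be named with WANBCJ up front to be picked up by the rescan.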

Now let's automate this code. The easiest way is to create a ps1 file and add the following code to it:

    # Load the Veeam snap-in; the Task Scheduler will not do this for us
    Add-PSSnapin -Name "VeeamPSSnapin"
    # Rescan every repository whose name starts with WANBCJ
    Get-VBRBackupRepository | Where-Object { $_.Name -match "^WANBCJ" } | ForEach-Object { Sync-VBRBackupRepository -Repository $_ | Out-Null }

Notice the added line that loads the VeeamPSSnapin. When you start PowerShell via the Veeam menu, this is done automatically. However, the Windows Task Scheduler won't do it for you, so you have to do it yourself. In my case I saved the script as c:\vbrscripts\syncrepo.ps1.
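If you also want to run the same file from a session where the snap-in is already loaded (for example the PowerShell window opened from the Veeam menu), `Add-PSSnapin` can complain that the snap-in was already added. A slightly more defensive variant could look like the sketch below. It uses only the cmdlets already shown plus the standard `Get-PSSnapin`, and I have not tested it beyond that assumption.

```powershell
# Load the Veeam snap-in only if this session does not have it yet.
if (-not (Get-PSSnapin -Name "VeeamPSSnapin" -ErrorAction SilentlyContinue)) {
    Add-PSSnapin -Name "VeeamPSSnapin"
}

# Rescan every repository whose name starts with the WANBCJ prefix.
Get-VBRBackupRepository |
    Where-Object { $_.Name -match "^WANBCJ" } |
    ForEach-Object { Sync-VBRBackupRepository -Repository $_ | Out-Null }
```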

Now, in the Windows Task Scheduler, you can schedule a new task to run this script on a daily basis (or more frequently if you prefer). The program is "powershell" and the argument is the path to the script, enclosed in quotes.
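The same task can also be created from a PowerShell prompt instead of the Task Scheduler GUI. This is only a sketch: the task name and the 06:00 start time are placeholders I picked, and you may need to add `/RU` and `/RP` so that the task runs under an account that is allowed to use Veeam.

```powershell
# Placeholder task name and start time; the path is where I saved the script.
schtasks /Create /TN "Veeam rescan WANBCJ repositories" `
    /TR "powershell.exe -NoProfile -ExecutionPolicy Bypass -File C:\vbrscripts\syncrepo.ps1" `
    /SC DAILY /ST 06:00
```

`-ExecutionPolicy Bypass` is there because a default PowerShell install may refuse to run unsigned ps1 files. Drop it if your execution policy already allows the script.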

If you want to test that it works, just hit the Run button and check that the event shows up in the history tab.

The nice thing about this script is that if you add another repository whose name starts with WANBCJ, you won't have to do anything: it will be rescanned automatically!