It is always great when vendors announce new features, because most likely they are solving an issue existing customers have. One of the better features Veeam has released is Backup from Storage Snapshots. In v7, this feature supports the HP StoreServ and HP StoreVirtual storage platforms. As recently announced, in v8 this feature will be extended to NetApp.

But what problem does it really solve? When I talk to customers, I see two kinds: the ones that have the actual problem and the ones that don't. You can easily recognize them, because customers in the first category immediately say: "We need this!".

So let's look at the problem first, and then explain how Backup from Storage Snapshots (BfSS) works.

With the introduction of virtualisation, there are actually more layers that have to be made consistent before you can make a backup. In the old days, it was just the application, the operating system and then the hardware (SAN) underneath. Now a new layer has been introduced: the virtualisation layer itself.

Since Veeam backs up at the VM level, it makes sense to take this layer into account. Veeam does this by taking VM snapshots (for VMware). To make everything consistent inside the VM, there are a couple of possibilities:

- Use Veeam Application Aware Image Processing: basically talk to VSS directly via a runtime component. If necessary, it can also truncate logs for Exchange or SQL

- Use VMware Tools: For Windows it will also do a (filesystem level) integration with VSS. For other platforms (or if you prefer), you can use pre-snapshot/post-snapshot scripts.

Once everything is consistent in the VM, Veeam then triggers a VMware snapshot. When that snapshot is created, everything can be released in the guest because you have a "consistent photo" of your VM. But what happens underneath?

Before the snapshot is created, the VM is happily reading and writing to the VMDK.

After a snapshot has been created, VMware creates a delta disk. This disk is very small in the beginning. However, while the snapshot exists (and thus the delta disk exists), all writes are redirected to this delta disk. The great advantage is that the original VMDK is now only read from, and only for blocks that have not been overwritten since. This means that we can back up the original VMDK knowing that it is in a consistent state and won't be altered during the backup.

Important: VMware snapshots are not "transaction logs". If a block is updated for a second time, the existing block in the sparse delta disk is updated in place, so it does not take extra space. That means the delta can at most grow to the size of the original VMDK.
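To make the "not a transaction log" point concrete, here is a minimal Python sketch of a base disk plus one delta disk. It is a toy model under my own assumptions (the class and method names are hypothetical and have nothing to do with VMware's on-disk format); it only shows that rewriting the same block does not make the delta grow.

```python
class SnapshottedDisk:
    """Toy model: a frozen base disk plus one block-level delta (not a log)."""

    def __init__(self, base_blocks):
        self.base = list(base_blocks)  # never modified while the snapshot exists
        self.delta = {}                # block index -> latest data for that block

    def write(self, index, data):
        # Writes are redirected to the delta; a rewrite replaces the old entry.
        if not 0 <= index < len(self.base):
            raise IndexError(index)
        self.delta[index] = data

    def read(self, index):
        # Overwritten blocks come from the delta, everything else from the base.
        return self.delta.get(index, self.base[index])

    def delta_blocks(self):
        # Number of blocks the delta currently occupies.
        return len(self.delta)


disk = SnapshottedDisk(["a", "b", "c", "d"])
disk.write(1, "B1")
disk.write(1, "B2")  # second update of the same block: no extra space used
disk.write(3, "D1")
```

After three writes the delta holds only two blocks, and it can never hold more blocks than the base disk has.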

Well so far, so good. But what is the problem with this?

If your VM is not very I/O active, there is not really a problem. Because of the Changed Block Tracking feature, Veeam only has to back up the blocks that have changed between backups. That means fast backups, and due to the low I/O, the delta won't grow very fast.

But what if you have an I/O active VM? Well, then you have a couple of problems. First of all, your snapshot will grow in 16 MB extents (or at least that is what I could find on the net). But every time it grows, it needs to lock the clustered volume (VMFS) to allocate more space for the VMDK (metadata updates). That means extra I/O is needed, but it can also impact other VMs that run on the same volume because of these locks. This problem also occurs with thin provisioning.
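As a rough back-of-the-envelope illustration, the number of times the delta has to grow (and thus trigger a metadata update) scales with its final size. The sketch below assumes the 16 MB extent size mentioned above, which I have not verified, and the delta sizes are made-up examples.

```python
import math

EXTENT_MIB = 16  # assumption taken from the text above, not a verified figure


def growth_operations(delta_mib, extent_mib=EXTENT_MIB):
    """How many extent allocations are needed to reach a given delta size."""
    return math.ceil(delta_mib / extent_mib)


quiet_vm = growth_operations(500)        # hypothetical 500 MiB delta
busy_vm = growth_operations(50 * 1024)   # hypothetical 50 GiB delta
```

With these example numbers the quiet VM needs 32 allocations and the busy VM 3200, each one a potential lock on the shared volume.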

Secondly, if you are using thin provisioned VMFS volumes, the VMFS volumes will consume more and more space on the SAN. When you delete the snapshot, that space won't be automatically reclaimed. VMware now supports the UNMAP VAAI primitive, but as far as I know it is not an automatic process:

http://cormachogan.com/2013/11/27/vsphere-5-5-storage-enhancements-part-4-unmap/

Finally, because it is an I/O active VM, it has probably changed a lot of blocks between backups, meaning that the VM backup might take a long time.

So if you could reduce the time the snapshot is active, the snapshot won't have the chance to grow that big. You might not avoid the problems completely but at least the impact will be a lot smaller.

But it can get worse. What happens when you delete (commit) the snapshot? Of course your data is not just discarded; it needs to be re-applied to the original VMDK. However, writes are still being made to that snapshot, so you cannot just start merging. After all, what happens to a block you are committing back and updating at the same time? For that, VMware uses a "consolidated helper" snapshot.

Basically VMware creates a second snapshot. All writes will be redirected to this helper. Then you can start committing the data back to the original VMDK.

Once that is done, the hope is of course that the "consolidated helper" snapshot is smaller than the original snapshot. For example, if the backup took 4 hours and consolidating the original snapshot took only 10 minutes, the helper snapshot might be only a fraction of the size of the original snapshot.

What is important to notice is that the bigger the snapshot, the more extra I/O will be generated during the commit. You need to read the blocks from the snapshot and then overwrite them in the original VMDK. That means that during a commit, you might notice a performance impact on the volume and thus on your original VM as well.

But what happens after that commit? You are left with the consolidated helper, so you need to commit that as well. In 3.5, VMware simply froze the VM (holding off all I/O) and committed the delta file to the VMDK (called a synchronous commit). That means you could have huge freeze times (stuns). At one point, VMware improved this process by creating additional helper snapshots and going through the same process over and over again until it is confident that it can commit the last snapshot in a short time.

There are actually 2 parameters that impact this process.

- snapshot.maxIterations: how many times the process of creating helper snapshots and committing them is repeated. After all iterations are over, the VM will be stunned anyway and the commit will be forced. By default, VMware will go through at most 10 iterations.

- snapshot.maxConsolidateTime: the estimated time for which VMware is allowed to stun your VM. The default is 6 seconds. For example, if after 3 iterations VMware is confident it can commit the blocks of the helper snapshot in less than 6 seconds, it will freeze all I/O (stun), commit the snapshot, resume I/O (unstun) and not go through any additional iterations.

http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2039754

So if you are running an I/O intensive program, the impact might be huge if you have to go through several iterations. Also imagine that instead of getting smaller consolidation helpers, you get bigger helpers after several iterations: the stun time might then become larger instead of smaller. In the KB article there is an example where you start with a 5 minute stun time and actually end up with a 30 minute stun time.
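The iteration logic can be sketched in a few lines of Python. This is a simplified model under my own assumptions, not VMware's actual algorithm: I assume constant write and commit rates (in MB/s, hypothetical numbers), and that each helper snapshot grows for exactly as long as the previous commit takes. The two keyword arguments mirror snapshot.maxIterations and snapshot.maxConsolidateTime with the defaults mentioned above.

```python
def consolidate(delta_mb, write_mb_s, commit_mb_s,
                max_iterations=10, max_consolidate_time=6.0):
    """Toy consolidation model; returns (helper_rounds, stun_seconds)."""
    rounds = 0
    while True:
        estimated = delta_mb / commit_mb_s  # time needed to commit this delta
        # Stun and commit if it is fast enough, or if we ran out of iterations.
        if estimated <= max_consolidate_time or rounds >= max_iterations:
            return rounds, estimated
        # Otherwise commit online while a helper snapshot absorbs new writes.
        delta_mb = write_mb_s * estimated
        rounds += 1


# Writes slower than the commit: each helper is smaller than the previous one.
converging = consolidate(delta_mb=36000, write_mb_s=10, commit_mb_s=100)

# Writes faster than the commit: helpers keep growing until the forced stun.
diverging = consolidate(delta_mb=30000, write_mb_s=120, commit_mb_s=100)
```

In the first case the model ends with a stun of a few seconds after two helper rounds. In the second case the estimate starts at five minutes and only gets worse, so after the maximum number of iterations the VM is stunned for much longer than if it had been committed right away.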

As a side note, I have to thank my colleague Andreas for pointing us to these parameters. While they were undocumented back then, they helped me find the info I needed. His article describes the process of lowering Max Consolidate Time for an Exchange DAG cluster. Granted, VMware might go through additional iterations, but the result might be that the stun time will be smaller, thus not causing any failover. As he suggests as well, only do this together with support. If your I/O is too high, you might actually amplify the problem as described above.

The conclusion is that if you keep the delta file small, the commit will be much faster, it will have to go through far fewer iterations, and the stun time might be minimized (even if you go through the maximum number of iterations).

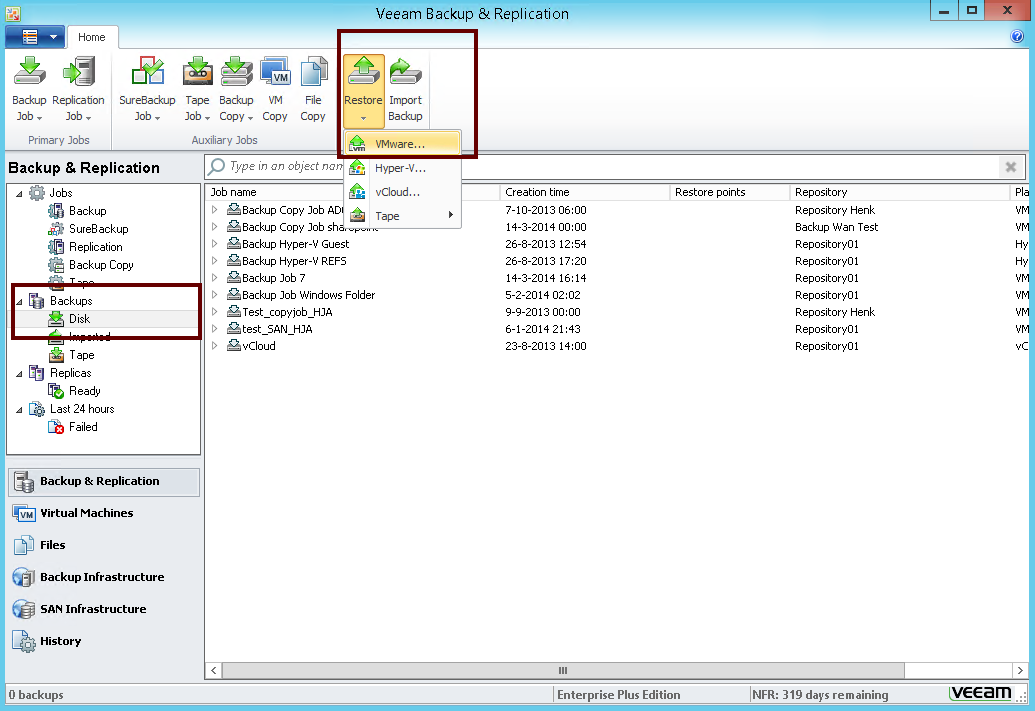

So how does BfSS help then? Well, when you use BfSS, a storage snapshot is created right after the snapshot at the VM level is created. That means you can then instantly delete the snapshot at the VM level.

So as you can see the start is the same.

But then you create a snapshot by talking to the SAN/NAS device that is hosting the VMFS volume / NFS share. This means your VM snapshot is "included" in the SAN snapshot, which allows you to instantly commit the snapshot at the VM level.

Afterwards, the Veeam Backup & Replication proxy can read the data directly via the storage controller. Granted, Veeam will still create a VM snapshot, but you can imagine that a delta of 2 minutes will be roughly 100 times smaller than a delta of 3 hours.
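To put some numbers on that, here is a small Python sketch of a worst-case delta size as a function of how long the VM snapshot stays open. The write rate and VMDK size are hypothetical values I picked for illustration, and the bound assumes every write hits a new block (the delta can never exceed the size of the VMDK).

```python
def delta_upper_bound_mb(write_mb_s, snapshot_seconds, vmdk_mb):
    """Worst-case delta size: every write lands on a new block, capped at the VMDK size."""
    return min(write_mb_s * snapshot_seconds, vmdk_mb)


VMDK_MB = 500 * 1024   # hypothetical 500 GB disk
WRITE_MB_S = 5         # hypothetical sustained write rate

with_bfss = delta_upper_bound_mb(WRITE_MB_S, 2 * 60, VMDK_MB)       # 2 minute snapshot
without_bfss = delta_upper_bound_mb(WRITE_MB_S, 3 * 3600, VMDK_MB)  # 3 hour snapshot
ratio = without_bfss / with_bfss
```

With these numbers the 2 minute delta is at most 600 MB against 54000 MB for the 3 hour one, a factor of 90.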

Sometimes customers ask me whether you are not just shifting the problem. From a thin provisioning perspective, of course not, because the SAN is aware of the blocks it deletes. From a performance perspective, SAN arrays are designed to do this. In fact, snapshots are NetApp's bread and butter. They just redirect pointers, so deleting a snapshot is just throwing away the pointers. So no nasty commit times there.
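The pointer idea can be illustrated with a toy Python model. This is purely my own sketch of a pointer-based snapshot, not how any particular array implements it; the class and method names are hypothetical, and reclaiming unreferenced blocks is left out.

```python
class PointerVolume:
    """Toy volume where a snapshot is only a copy of the block pointers."""

    def __init__(self, blocks):
        self.store = dict(enumerate(blocks))               # physical id -> data
        self.active = {i: i for i in range(len(blocks))}   # logical -> physical id
        self.snapshots = {}
        self._next_id = len(blocks)

    def snapshot(self, name):
        # Only the pointer table is copied, never the data itself.
        self.snapshots[name] = dict(self.active)

    def write(self, logical, data):
        # New data goes to a new physical block; the active pointer is redirected.
        self.store[self._next_id] = data
        self.active[logical] = self._next_id
        self._next_id += 1

    def read(self, logical, snapshot=None):
        table = self.active if snapshot is None else self.snapshots[snapshot]
        return self.store[table[logical]]

    def delete_snapshot(self, name):
        # Nothing is merged back: the pointers are simply thrown away.
        del self.snapshots[name]


vol = PointerVolume(["a", "b"])
vol.snapshot("backup")
vol.write(0, "A2")
old_view = vol.read(0, snapshot="backup")
vol.delete_snapshot("backup")
```

The active volume already has the latest data at all times, so deleting the snapshot involves no commit of a delta back into it.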

But there is another bonus with storage snapshots that will be exclusively available for NetApp. VMware has still not solved the stun problem that you can have with VMs hosted on NFS volumes when using Hot-Add backup mode. Backup & Replication has a way around this, but it still requires you to deploy a proxy on each host.

With BfSS, v8 will also implement an NFS client in the proxy component for NetApp. That means that even though you use NFS, you can use a "Direct SAN" approach (or as I like to call it, Direct NAS). First of all, it means you won't have those nasty stuns, but more importantly, you will read the data where it resides. That means no extra overhead on the ESXi side (no CPU/MEM needed!) when you are running your backups.

So although demoing this feature might not look impressive (unless you have this problem of course), you can see that it is a major feature that has been added to Veeam Backup & Replication. The impact of making backups of I/O intensive VMs will be drastically lower, allowing you to do more backups and thus yielding a better RPO.

*Edit* I also found that VMware has added a new parameter in one of its patches, but what snapshot.asyncConsolidate.forceSync = "FALSE" does is not described.
