In this blog post I'll show you how you can play around with the Veeam RESTful API via PowerShell. This post will show you how to find a job and start it. You might wonder why you would do such a thing. Well, in my case it is to showcase the interaction with the API line by line, much as you would with wget or curl. If you want an interactive way of playing with the API, know that you can always replace /api with /web/#/api/ (for example http://localhost:9399/web/#/api/) to get an interactive browser. However, via PowerShell you get a real sense that you are interacting with the API, and all methods used here should be portable to any other language. That is why I've chosen not to use "Invoke-RestMethod", but rather a raw HTTP call.

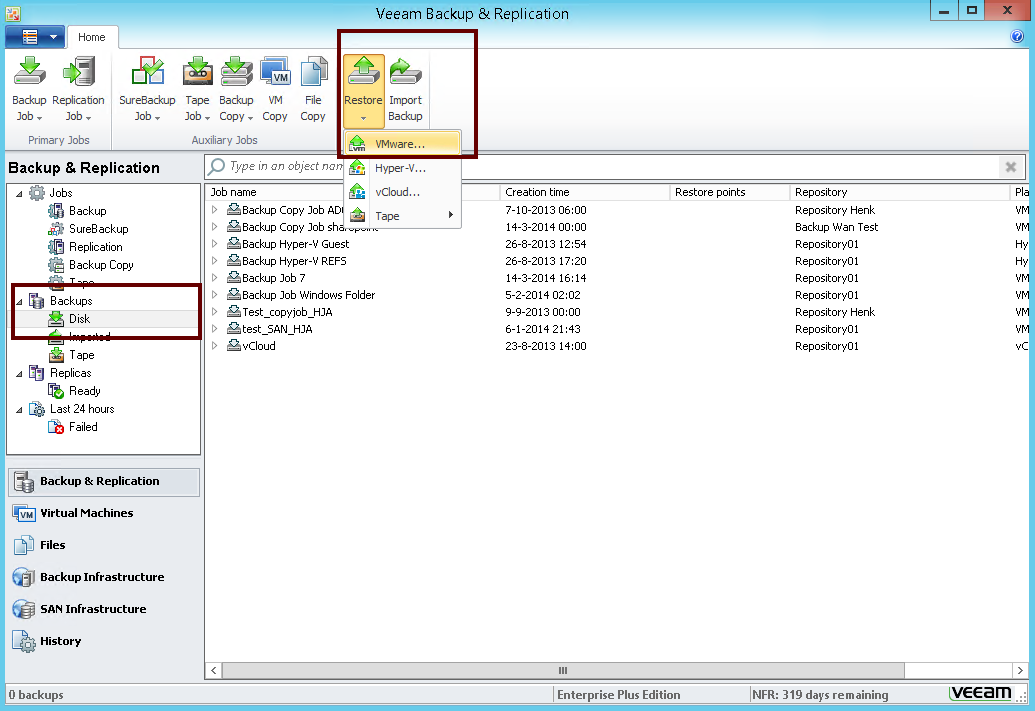

The first step (which might not be required) is to ignore the self-signed certificate. If you access the API via the FQDN on the server itself, the certificate should be trusted, but relying on that would make my code less generic.

    add-type @"
    using System.Net;
    using System.Security.Cryptography.X509Certificates;
    public class TrustAllCertsPolicy : ICertificatePolicy {
        public bool CheckValidationResult(
            ServicePoint srvPoint, X509Certificate certificate,
            WebRequest request, int certificateProblem) {
            return true;
        }
    }
    "@
    [System.Net.ServicePointManager]::CertificatePolicy = New-Object TrustAllCertsPolicy

With that code executed, you have told .NET to trust every certificate. The next step is to get the API version.
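As an aside on the certificate workaround: on PowerShell 6 and later, `Invoke-WebRequest` has a `-SkipCertificateCheck` switch that serves the same purpose. A minimal sketch, assuming you want one script for both old and new PowerShell; the helper name `Get-TlsBypassParams` is made up for this illustration.

```powershell
# Hypothetical helper: returns extra Invoke-WebRequest parameters that
# disable certificate validation on PowerShell 6+ (lab use only).
function Get-TlsBypassParams {
    param([int]$MajorVersion = $PSVersionTable.PSVersion.Major)
    if ($MajorVersion -ge 6) {
        return @{ SkipCertificateCheck = $true }
    }
    # Windows PowerShell 5.1 has no such switch; rely on the
    # TrustAllCertsPolicy class instead.
    return @{}
}

$tlsParams = Get-TlsBypassParams
# Usage (needs a reachable server, so it is left commented out):
# $r_api = Invoke-WebRequest -Method Get -Uri "https://localhost:9398/api/" @tlsParams
```

Splatting an empty hashtable is harmless, so the same call works on both versions.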

    $r_api = Invoke-WebRequest -Method Get -Uri "https://localhost:9398/api/"
    $r_api_xml = [xml]$r_api.Content
    $r_api_links = @($r_api_xml.EnterpriseManager.SupportedVersions.SupportedVersion | ? { $_.Name -eq "v1_2" })[0].Links

With the first request, we simply do a GET request to the API page. The Veeam REST API uses XML rather than JSON, so we can just convert the content itself to XML. Once that is done, we can browse the XML. The cool thing about PowerShell is that it lets you browse the structure with autocompletion. Just execute $r_api_xml and you will get the root element. By adding a dot and the name of the root element, you can see what's underneath this node. You can repeat this process to "explore" the XML (or you can just print out $r_api.Content without conversion to see the plain XML).

Under the root container (EnterpriseManager), we have a list of all SupportedVersion elements. By applying a filter we get the v1_2 (v9) API version. This one has a single Link, which indicates how you can log on.

    PS C:\Users\Timothy> $r_api_links.Link | fl
    Href : https://localhost:9398/api/sessionMngr/?v=v1_2
    Type : LogonSession
    Rel  : Create

The Href shows the link we have to follow. The Type tells us the name of the link, and finally Rel tells us which HTTP method we should use. Create means we need to do a POST.
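If you want to try the XML browsing without a server at hand, here is a small offline sketch. The XML below is a hand-written, trimmed stand-in for the real response (the v1_1 entry is invented purely to give the filter something to skip), so treat it as an illustration of the shape rather than captured output.

```powershell
# Hand-written sample in the shape described in this post (not real server output).
$sample = [xml]@'
<EnterpriseManager>
  <SupportedVersions>
    <SupportedVersion Name="v1_1">
      <Links>
        <Link Href="https://localhost:9398/api/sessionMngr/?v=v1_1" Type="LogonSession" Rel="Create" />
      </Links>
    </SupportedVersion>
    <SupportedVersion Name="v1_2">
      <Links>
        <Link Href="https://localhost:9398/api/sessionMngr/?v=v1_2" Type="LogonSession" Rel="Create" />
      </Links>
    </SupportedVersion>
  </SupportedVersions>
</EnterpriseManager>
'@

# Same filter as in the post: pick the v1_2 entry and read its single Link.
$version = @($sample.EnterpriseManager.SupportedVersions.SupportedVersion |
    Where-Object { $_.Name -eq "v1_2" })[0]
$loginLink = $version.Links.Link
"{0} -> {1} ({2})" -f $loginLink.Type, $loginLink.Href, $loginLink.Rel
```

Wrapping the filter result in `@(...)` keeps the `[0]` index safe whether the filter returns one element or several.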

Most of the time:

- the GET method is used if you want to get details but don't want to do a real action
- the POST method is used if you want to do a real action
- the PUT method is used if you want to update something
- the DELETE method is used if you want to destroy something
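The pairing between a link's Rel and the HTTP method can be sketched as a small lookup table. This is only an illustration built from the Rel values used in this post (`Delete` is assumed from the logout step); it is not an official or complete list, and the helper name `Get-MethodForRel` and the GET fallback are my own choices.

```powershell
# Rel values from this post mapped to the HTTP method used for them.
$relToMethod = @{
    Create    = "Post"
    Start     = "Post"
    Alternate = "Get"
    Delete    = "Delete"   # assumption: Rel value of the logout link
}

function Get-MethodForRel {
    param([string]$Rel)
    if ($relToMethod.ContainsKey($Rel)) { return $relToMethod[$Rel] }
    # Unknown relationship: fall back to a read-only request.
    return "Get"
}

Get-MethodForRel -Rel "Create"
```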

For authentication we have to do something special: we need to send the credentials via basic authentication. This is a plain HTTP standard, so I'll show you two ways to do it.

    $r_login = Invoke-WebRequest -method Post -Uri $r_api_links.Link.Href -Credential (Get-Credential -Message "Basic Auth" -UserName "rest")

    #even more raw
    $auth = "Basic " + [System.Convert]::ToBase64String([System.Text.Encoding]::UTF8.GetBytes("mylogin:myadvancedpassword"))
    $r_login = Invoke-WebRequest -method Post -Uri $r_api_links.Link.Href -Headers @{"Authorization"=$auth}

The first method uses PowerShell's built-in functionality for basic authentication. The second method shows what is really going on in the HTTP request: "username:password" is encoded as base64, then "Basic " and this encoded string are concatenated. The result is set in the Authorization header of the request.
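To make the encoding step tangible, here is a small sketch that builds the header and decodes it again. The function name `New-BasicAuthHeader` is made up for this example; the credentials are the same placeholders used in this post.

```powershell
# Hypothetical helper: builds the value for the Authorization header.
function New-BasicAuthHeader {
    param([string]$UserName, [string]$Password)
    $pair  = "{0}:{1}" -f $UserName, $Password
    $bytes = [System.Text.Encoding]::UTF8.GetBytes($pair)
    return "Basic " + [System.Convert]::ToBase64String($bytes)
}

$auth = New-BasicAuthHeader -UserName "mylogin" -Password "myadvancedpassword"

# Decode again to confirm the header simply carries "username:password".
$encoded = $auth.Substring("Basic ".Length)
$decoded = [System.Text.Encoding]::UTF8.GetString([System.Convert]::FromBase64String($encoded))
$decoded
```

Because base64 is an encoding and not encryption, anyone who can read the request can read the password, which is one more reason to keep these calls on HTTPS.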

The result, if we logged on successfully, is a logon session, which has links to almost all main resources. Before we go any further, we need to analyze the response a bit.

    if ($r_login.StatusCode -lt 400) {

Whenever you make a call, you can check the StatusCode (the HTTP return code). You are expecting a number between 200 and 204, which means success. The exact meaning of each HTTP return code in the Veeam REST API is documented here: https://helpcenter.veeam.com/backup/rest/http_response_codes.html
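The success range mentioned here can be wrapped in a tiny helper if you prefer a stricter test than "less than 400". This is just a sketch; the name `Test-HttpSuccess` is made up, and the 200 to 204 range is taken from the text, not from a complete reading of the Veeam documentation.

```powershell
# Hypothetical helper: $true only for the 200-204 range described in this post.
function Test-HttpSuccess {
    param([int]$StatusCode)
    return ($StatusCode -ge 200 -and $StatusCode -le 204)
}

Test-HttpSuccess -StatusCode 201
```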

The next thing is to extract the REST session ID. Instead of sending the username and password every time, you send this header to authenticate. The header is returned after you have successfully logged in.

    #get session id which we need to do subsequent request
    $sessionheadername = "X-RestSvcSessionId"
    $sessionid = $r_login.Headers[$sessionheadername]

Now that we have the session ID extracted, let's do something useful.
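One note on the session header: depending on the PowerShell version, a response header value may come back as a single string or as a collection of strings. A small sketch that normalizes either shape into one string before reusing it; the helper name `Get-FirstHeaderValue` and the sample session ID are invented for illustration.

```powershell
# Hypothetical helper: returns the first value of a response header as a string.
function Get-FirstHeaderValue {
    param($Headers, [string]$Name)
    # @() wraps a single string and leaves a collection as an array.
    return [string](@($Headers[$Name])[0])
}

# Stand-ins for $r_login.Headers (the session id value is invented).
$asString     = @{ "X-RestSvcSessionId" = "abc123" }
$asCollection = @{ "X-RestSvcSessionId" = [string[]]@("abc123") }

$sessionheadername = "X-RestSvcSessionId"
$sessionid   = Get-FirstHeaderValue -Headers $asCollection -Name $sessionheadername
$authHeaders = @{ $sessionheadername = $sessionid }
```

The resulting `$authHeaders` hashtable is what gets passed to `-Headers` on every following request.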

    #content
    $r_login_xml = [xml]$r_login.Content
    $r_login_links = $r_login_xml.LogonSession.Links.Link
    $joblink = $r_login_links | ? { $_.Type -eq "JobReferenceList" }

First we take the logon session, convert it to XML, and browse the links. We are looking for the link of type "JobReferenceList". Let's follow this link. In the process, don't forget to set the session ID in your header.

    #get jobs with id we have
    $r_jobs = Invoke-WebRequest -Method Get -Headers @{$sessionheadername=$sessionid} -Uri $joblink.Href
    $r_jobs_xml = [xml]$r_jobs.Content
    $r_job = $r_jobs_xml.EntityReferences.Ref | ? { $_.Name -Match "myjob" }
    $r_job_alt = $r_job.Links.Link | ? { $_.Rel -eq "Alternate" }

The first two lines just fetch the page and convert it to XML. The page we requested is a list of all the jobs in reference format. The reference format is a compact way of representing the object you requested, basically showing the name and the ID of the job and some links. If you add "?format=Entity" to such a list (or to a request for an object or object list), you get the full details of the job.
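The name filter in the third line deserves a closer look: `-match` is a regular-expression match, so "myjob" also matches any job whose name merely contains that text. The offline sketch below shows the difference with `-eq`. The XML is hand-written in the shape described in this post, and the second job with its ID is invented.

```powershell
# Hand-written sample job reference list (not real server output).
$jobsXml = [xml]@'
<EntityReferences>
  <Ref Name="myjob" Type="JobReference">
    <Links>
      <Link Href="https://localhost:9398/api/jobs/f7d731be-53f7-40ca-9c45-cbdaf29e2d99?format=Entity" Name="myjob" Type="Job" Rel="Alternate" />
    </Links>
  </Ref>
  <Ref Name="myjob-weekly" Type="JobReference">
    <Links>
      <Link Href="https://localhost:9398/api/jobs/00000000-0000-0000-0000-000000000001?format=Entity" Name="myjob-weekly" Type="Job" Rel="Alternate" />
    </Links>
  </Ref>
</EntityReferences>
'@

$refs    = $jobsXml.EntityReferences.Ref
$byMatch = @($refs | Where-Object { $_.Name -match "myjob" })   # regex, partial match
$byEq    = @($refs | Where-Object { $_.Name -eq "myjob" })      # exact name only

# Follow the Alternate link of the exactly matching job.
$alt = $byEq[0].Links.Link | Where-Object { $_.Rel -eq "Alternate" }
$alt.Href
```

If several jobs share a prefix, `-eq` (or an anchored pattern such as `"^myjob$"`) avoids starting more than you intended.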

So why the reference representation? Well, it is a pretty similar concept to the GUI. If you open the Backup & Replication GUI and select the job list, you don't get the complete details of all the jobs. That would be kind of overwhelming. But when you click a specific job and try to edit it, you get all the details. Similarly, if you want to build an overview of all the jobs, you wouldn't want the API to give you all the unnecessary details, as this would make the "processing time" of the request much bigger (downloading the data, parsing it, extracting what you need, and so on).

So in the third line, we look for the job (or rather the reference to a job) whose name matches "myjob". We then take a look at the links of this job and look for the alternate link. Basically, this is the job ID plus "?format=Entity" to get the complete details of the job. Here is the output of $r_job_alt:

    PS C:\Users\Timothy> $r_job_alt | fl
    Href : https://localhost:9398/api/jobs/f7d731be-53f7-40ca-9c45-cbdaf29e2d99?format=Entity
    Name : myjob
    Type : Job
    Rel  : Alternate

Now ask for the details of the job

    $r_job_entity = Invoke-WebRequest -Method Get -Headers @{$sessionheadername=$sessionid} -Uri $r_job_alt.Href
    $r_job_entity_xml = [xml]$r_job_entity.Content
    $r_job_start = $r_job_entity_xml.Job.Links.Link | ? { $_.Rel -eq "Start" }

By now, the first two lines should be well understood. In the third line we are looking for a link on this object with the relationship "Start". This is basically the method we need to execute to start the job. Start is a real action, and if you look it up, you will see that you need the POST method to call it.

    #start the job
    $r_start = Invoke-WebRequest -Method Post -Headers @{$sessionheadername=$sessionid} -Uri $r_job_start.Href
    $r_start_xml = [xml]$r_start.Content

    #check if command is successfully delegated
    while ( $r_start_xml.Task.State -eq "Running") {
        $r_start = Invoke-WebRequest -Method Get -Headers @{$sessionheadername=$sessionid} -Uri $r_start_xml.Task.Href
        $r_start_xml = [xml]$r_start.Content
        write-host $r_start_xml.Task.State
        Start-Sleep -Seconds 1
    }
    write-host $r_start_xml.Task.Result

OK, that is a bunch of code, but I still wanted to post it. First we follow the start link and parse the XML. The result is actually a "Task". A Task represents a process that is running on the RESTful API, which you can refresh to check the actual status of the process. What is important is that it is the REST process, not the backup server process. That means that if a task is finished for REST, it doesn't necessarily mean that the action at the backup server is finished. What is finished is that the API has passed your command to the backup server.

So in this example, we check whether the task is "Running". If it is, we refresh the task, write out the State, sleep one second, and then do the whole thing again for as long as it stays in the "Running" state. Once it is finished, we write out the Task.Result. Again, if this task is Finished, it does not mean the job is finished, only that the backup server has "started" the job, hopefully successfully.
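The loop keeps polling for as long as the task reports "Running". As a hedged variation, the sketch below adds an upper bound on the number of polls so a stuck task cannot hang the script. The function name `Wait-WhileRunning` and its parameters are made up, and the state lookup is passed in as a script block so the example can run without a server.

```powershell
# Hypothetical helper: polls until the state is no longer "Running" or the cap is hit.
function Wait-WhileRunning {
    param(
        [scriptblock]$GetState,          # returns the current Task.State as a string
        [int]$MaxPolls = 60,
        [int]$DelayMilliseconds = 1000
    )
    $state = & $GetState
    $polls = 0
    while ($state -eq "Running" -and $polls -lt $MaxPolls) {
        Start-Sleep -Milliseconds $DelayMilliseconds
        $state = & $GetState
        $polls++
    }
    return $state
}

# Offline stand-in for refreshing the task: "Running" twice, then "Finished".
$fake = @{ Calls = 0 }
$finalState = Wait-WhileRunning -DelayMilliseconds 10 -GetState {
    $fake.Calls = $fake.Calls + 1
    if ($fake.Calls -le 2) { "Running" } else { "Finished" }
}
$finalState
```

Against a real server, the script block would do the same GET on the task's Href as the loop in this post and return `Task.State` from the parsed XML.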

Finally, we need to log out. That is rather easy. In the logon session, you will find the URL to do so. Since you are logging out, or in other words deleting your session, you need to use the "DELETE" method. You can verify that by checking the relationship (Rel) of the link.

    $logofflink = $r_login_xml.LogonSession.Links.Link | ? { $_.type -match "LogonSession" }
    Invoke-WebRequest -Method Delete -Headers @{$sessionheadername=$sessionid} -Uri $logofflink.Href

I have uploaded the complete code here:

https://github.com/tdewin/veeampowershell/blob/master/restdemo.ps1

There is some more code in that example that does the actual follow-up on the process. You can skip that code or analyze it if you want.